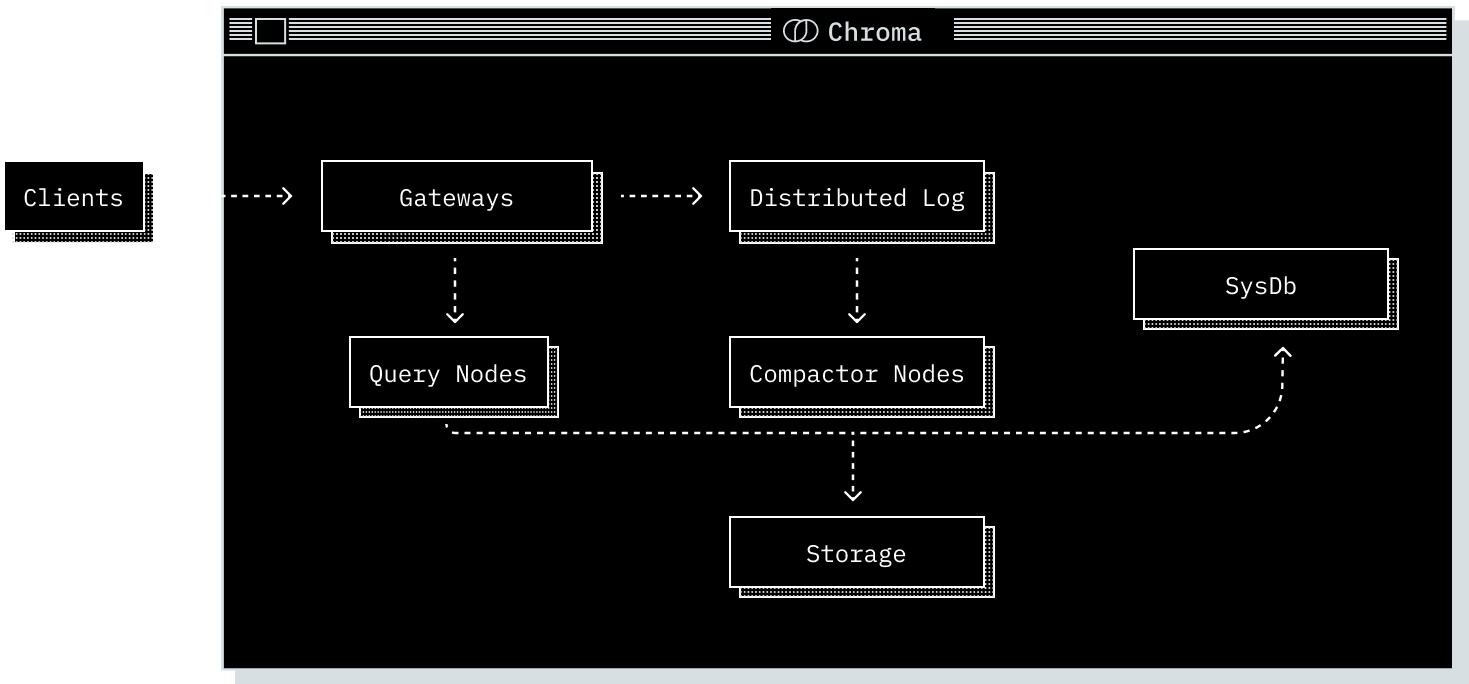

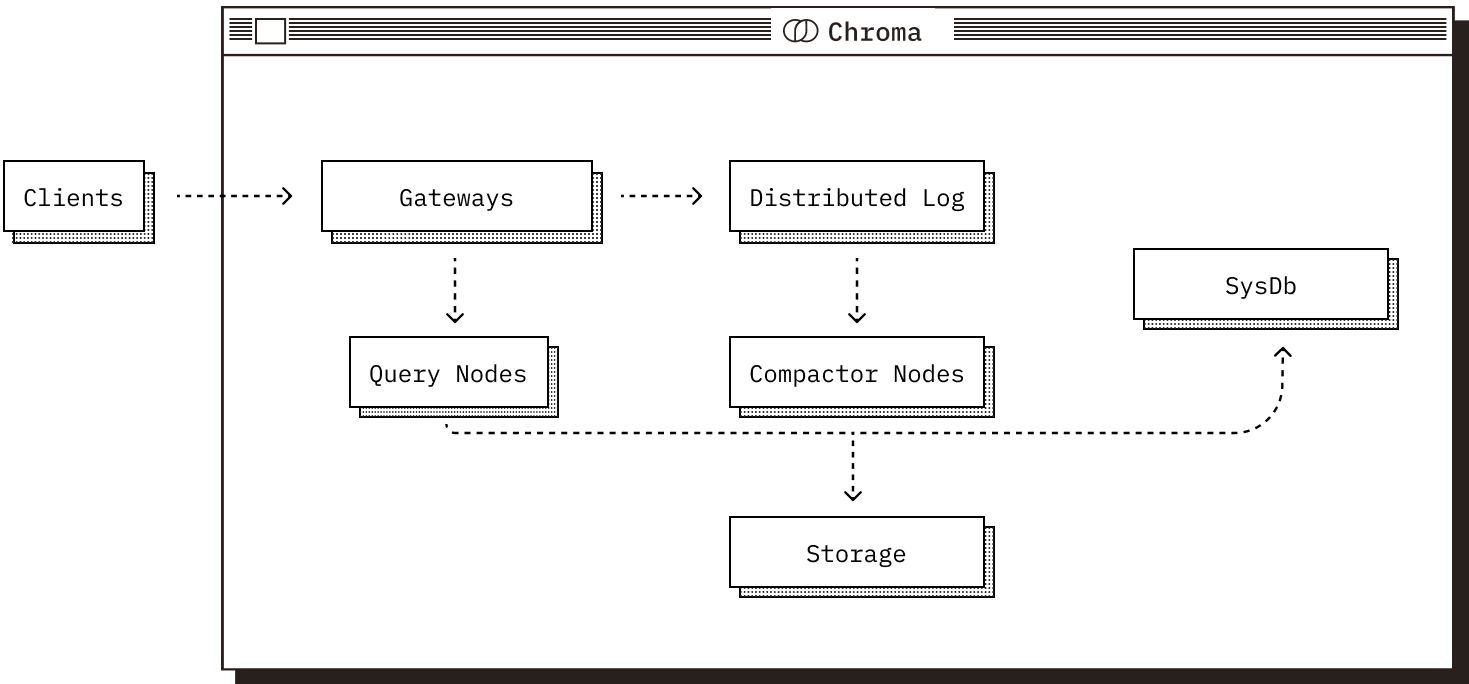

Distributed Chroma is designed for large-scale production workloads. Its components run as independent services so the system can scale horizontally while keeping a consistent API for clients.

Core Components

Regardless of deployment mode, Chroma is composed of five core components. Each plays a distinct role in the system and operates over the shared Chroma data model.

The Gateway

The gateway is the entrypoint for client traffic.

- Exposes a consistent API across all deployment modes.

- Handles authentication, rate limiting, quota management, and request validation.

- Routes requests to downstream services.
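
The responsibilities listed above can be sketched as a small request pipeline. This is an illustrative toy, not Chroma's gateway code: the `Gateway`, `TokenBucket`, and `GatewayError` names, the API-key lookup, and the `collection_id` field are all assumptions made for the example, and quota management is left out for brevity.

```python
import time
from dataclasses import dataclass, field


class GatewayError(Exception):
    """Raised when the gateway rejects a request before routing it."""


@dataclass
class TokenBucket:
    """Token-bucket rate limiter; this sketch keeps one bucket per tenant."""
    capacity: float
    refill_per_sec: float
    tokens: float = field(init=False)
    last_refill: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = self.capacity
        self.last_refill = time.monotonic()

    def allow(self) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        now = time.monotonic()
        elapsed = now - self.last_refill
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_sec)
        self.last_refill = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class Gateway:
    def __init__(self, api_keys, buckets, route):
        self.api_keys = api_keys  # api key -> tenant (hypothetical auth scheme)
        self.buckets = buckets    # tenant -> TokenBucket
        self.route = route        # callable(tenant, request) -> response

    def handle(self, api_key, request):
        # 1. Authentication
        tenant = self.api_keys.get(api_key)
        if tenant is None:
            raise GatewayError("unauthenticated")
        # 2. Rate limiting
        if not self.buckets[tenant].allow():
            raise GatewayError("rate limited")
        # 3. Request validation (hypothetical required field)
        if "collection_id" not in request:
            raise GatewayError("invalid request: missing collection_id")
        # 4. Routing to a downstream service
        return self.route(tenant, request)
```

Each check rejects the request before any downstream service is contacted, so invalid or over-limit traffic never reaches the log or the query executors.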

The Log

The log is Chroma’s write-ahead log.

- Records writes before they are acknowledged to clients.

- Ensures atomicity across multi-record writes.

- Provides durability and replay semantics.
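
To make the three properties above concrete, here is a minimal write-ahead log sketch. It is an assumption-laden toy: it writes to a local file with one JSON line per batch, whereas distributed Chroma stores its log in cloud object storage, and the `WriteAheadLog` class and its methods are hypothetical names.

```python
import json
import os


class WriteAheadLog:
    """Toy WAL: each batch of records is one JSON line in a local file."""

    def __init__(self, path):
        self.path = path

    def append_batch(self, records):
        # A batch is written as a single line, so on replay it is either
        # fully present or (if the write was torn) dropped as a whole.
        line = json.dumps(records) + "\n"
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(line)
            f.flush()
            os.fsync(f.fileno())
        # Only after fsync returns would the write be acknowledged.

    def replay(self):
        """Yield every complete batch in write order."""
        if not os.path.exists(self.path):
            return
        with open(self.path, "r", encoding="utf-8") as f:
            for line in f:
                if not line.endswith("\n"):
                    break  # torn tail: this batch was never acknowledged
                yield json.loads(line)
```

Replaying the file after a crash reproduces exactly the batches that were acknowledged, which is what lets downstream components rebuild their state from the log.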

The Query Executor

The query executor is responsible for all read operations.

- Runs vector similarity, full-text, and metadata search.

- Maintains a mix of in-memory and on-disk indexes.

- Coordinates with the log to serve consistent results.
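
One way to picture "coordinating with the log" is a read that overlays not-yet-indexed log entries on top of an index snapshot. The sketch below assumes a simplified log of `(op, id, vector)` tuples and a brute-force L2 search. The `query` function and its parameters are hypothetical and do not reflect Chroma's actual index structures.

```python
import math


def l2(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def query(index_snapshot, compacted_offset, log, query_vec, k):
    """Return the ids of the k nearest records, including unindexed writes.

    index_snapshot: dict id -> vector, covering log[:compacted_offset]
    log: list of (op, id, vector) tuples, op is "upsert" or "delete"
    """
    view = dict(index_snapshot)
    # Overlay the tail of the log that the index does not yet reflect.
    for op, rec_id, vec in log[compacted_offset:]:
        if op == "upsert":
            view[rec_id] = vec
        elif op == "delete":
            view.pop(rec_id, None)
    ranked = sorted(view.items(), key=lambda kv: l2(kv[1], query_vec))
    return [rec_id for rec_id, _ in ranked[:k]]
```

Because the overlay is applied at read time, a record written moments ago is visible to queries even though no index has been rebuilt yet.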

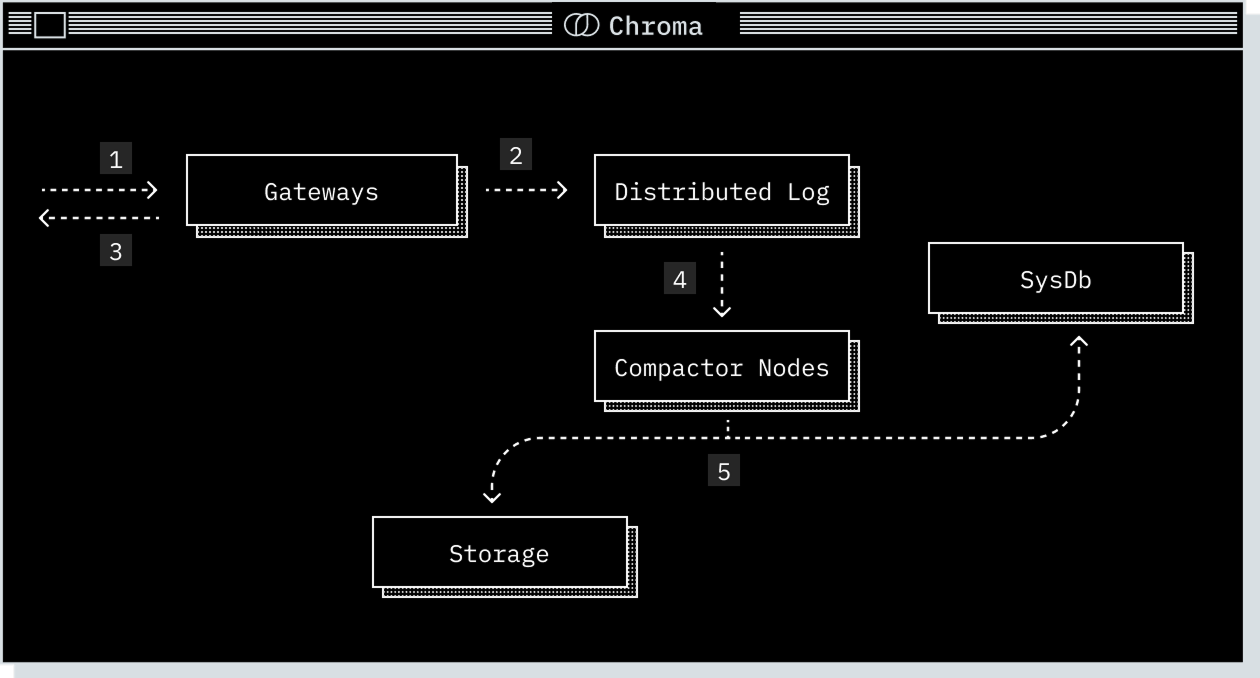

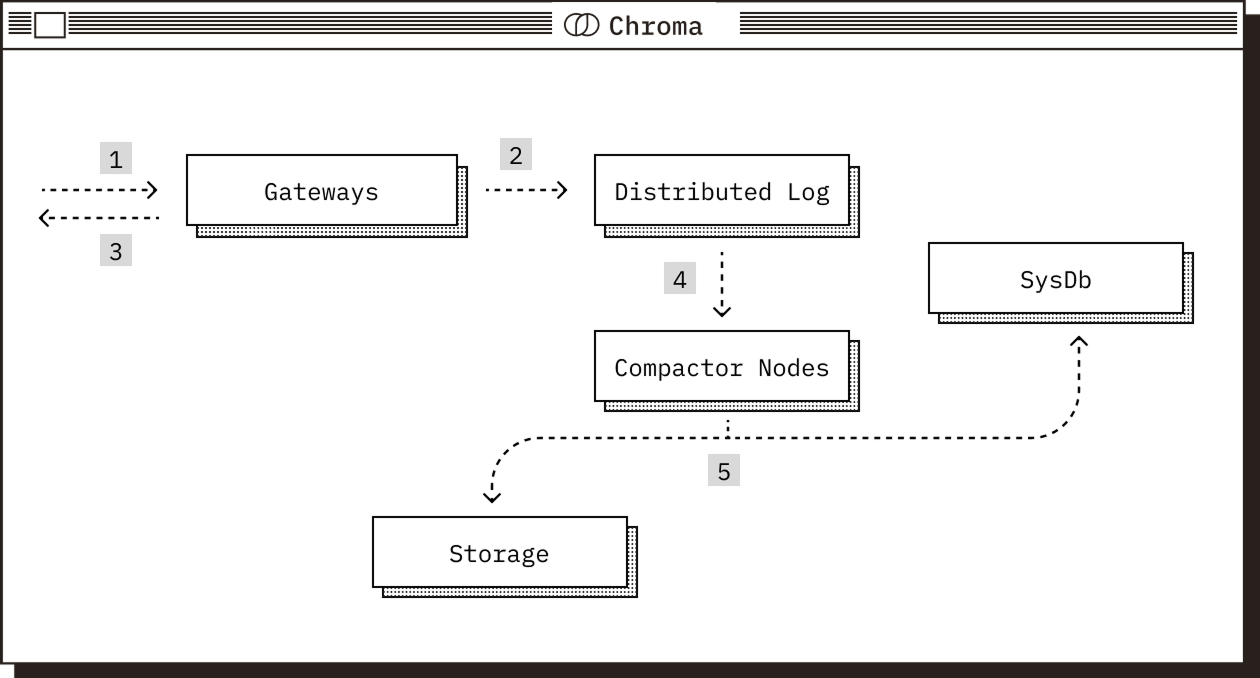

The Compactor

The compactor periodically builds and maintains indexes.

- Reads from the log and produces updated vector, full-text, and metadata indexes.

- Writes materialized index data to storage.

- Updates the system database with metadata about new index versions.
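
A single compaction pass following the three steps above might look like the sketch below. Everything here is a simplification chosen for illustration: the log is a list of `(op, id, payload)` tuples, `storage` and `sysdb` are plain dictionaries, and `compact_once` is a hypothetical name.

```python
def compact_once(collection_id, log, storage, sysdb):
    """Fold unindexed log entries into a new index version.

    log:     list of (op, id, payload) tuples, op is "upsert" or "delete"
    storage: dict (collection_id, version) -> materialized index (id -> payload)
    sysdb:   dict collection_id -> (current_version, compacted_offset)
    """
    version, offset = sysdb.get(collection_id, (0, 0))
    tail = log[offset:]
    if not tail:
        return version  # nothing new to compact

    # 1. Read from the log and build the updated index.
    index = dict(storage.get((collection_id, version), {}))
    for op, rec_id, payload in tail:
        if op == "upsert":
            index[rec_id] = payload
        elif op == "delete":
            index.pop(rec_id, None)

    # 2. Write the materialized index to storage under a new version.
    new_version = version + 1
    storage[(collection_id, new_version)] = index

    # 3. Publish the new version in the system database last, so readers
    #    never see a version whose data has not been written yet.
    sysdb[collection_id] = (new_version, len(log))
    return new_version
```

Writing the index before updating the catalog is the design choice worth noting: the catalog update acts as the commit point for the new version.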

The System Database

The system database is Chroma’s internal catalog.

- Tracks tenants, databases, collections, and their metadata.

- Stores cluster metadata in distributed deployments.

- Is backed by a SQL database.
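
As a rough illustration of a SQL-backed catalog, the sketch below models the tenant, database, and collection hierarchy with Python's built-in `sqlite3` module. The schema, column names, and helper functions are invented for this example and should not be read as Chroma's actual system database schema.

```python
import sqlite3

# Hypothetical schema: tenants own databases, databases own collections.
SCHEMA = """
CREATE TABLE IF NOT EXISTS tenants (name TEXT PRIMARY KEY);
CREATE TABLE IF NOT EXISTS databases (
    id     INTEGER PRIMARY KEY,
    tenant TEXT NOT NULL REFERENCES tenants(name),
    name   TEXT NOT NULL,
    UNIQUE (tenant, name)
);
CREATE TABLE IF NOT EXISTS collections (
    id            TEXT PRIMARY KEY,
    database_id   INTEGER NOT NULL REFERENCES databases(id),
    name          TEXT NOT NULL,
    index_version INTEGER NOT NULL DEFAULT 0,
    UNIQUE (database_id, name)
);
"""


def open_catalog(path=":memory:"):
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def create_collection(conn, tenant, database, collection_id, name):
    with conn:  # commit all inserts together, or roll back on error
        conn.execute("INSERT OR IGNORE INTO tenants (name) VALUES (?)", (tenant,))
        conn.execute(
            "INSERT OR IGNORE INTO databases (tenant, name) VALUES (?, ?)",
            (tenant, database),
        )
        (db_id,) = conn.execute(
            "SELECT id FROM databases WHERE tenant = ? AND name = ?",
            (tenant, database),
        ).fetchone()
        conn.execute(
            "INSERT INTO collections (id, database_id, name) VALUES (?, ?, ?)",
            (collection_id, db_id, name),
        )


def lookup_collection(conn, tenant, database, name):
    """Return (collection_id, index_version), or None if not found."""
    return conn.execute(
        "SELECT c.id, c.index_version FROM collections c "
        "JOIN databases d ON d.id = c.database_id "
        "WHERE d.tenant = ? AND d.name = ? AND c.name = ?",
        (tenant, database, name),
    ).fetchone()
```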

Runtime And Storage

In distributed mode, Chroma’s components are deployed independently.

- The log and built indexes are stored in cloud object storage.

- The system catalog is backed by a SQL database.

- Services use local SSDs as caches to reduce object storage latency and cost.
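
The caching role of local SSDs can be sketched as a read-through cache in front of a slower object store. The example below is a minimal in-memory LRU version under stated assumptions: `fetch` stands in for an object storage read, and `ReadThroughCache` is a hypothetical name rather than a Chroma component.

```python
from collections import OrderedDict


class ReadThroughCache:
    """LRU cache that falls back to a slower fetch function on a miss."""

    def __init__(self, fetch, capacity):
        self.fetch = fetch        # callable(key) -> value, e.g. an object store read
        self.capacity = capacity  # maximum number of cached entries
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get(self, key):
        if key in self.entries:
            self.entries.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self.entries[key]
        self.misses += 1
        value = self.fetch(key)            # slow path: go to object storage
        self.entries[key] = value
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return value
```

Every hit avoids one object storage request, which is where both the latency and the cost savings come from.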

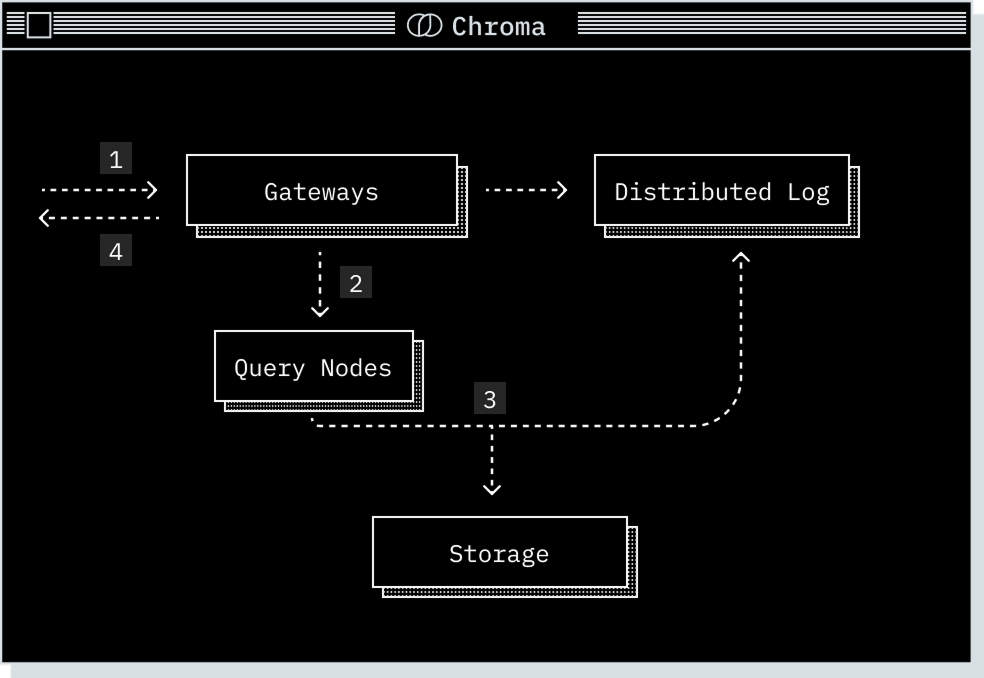

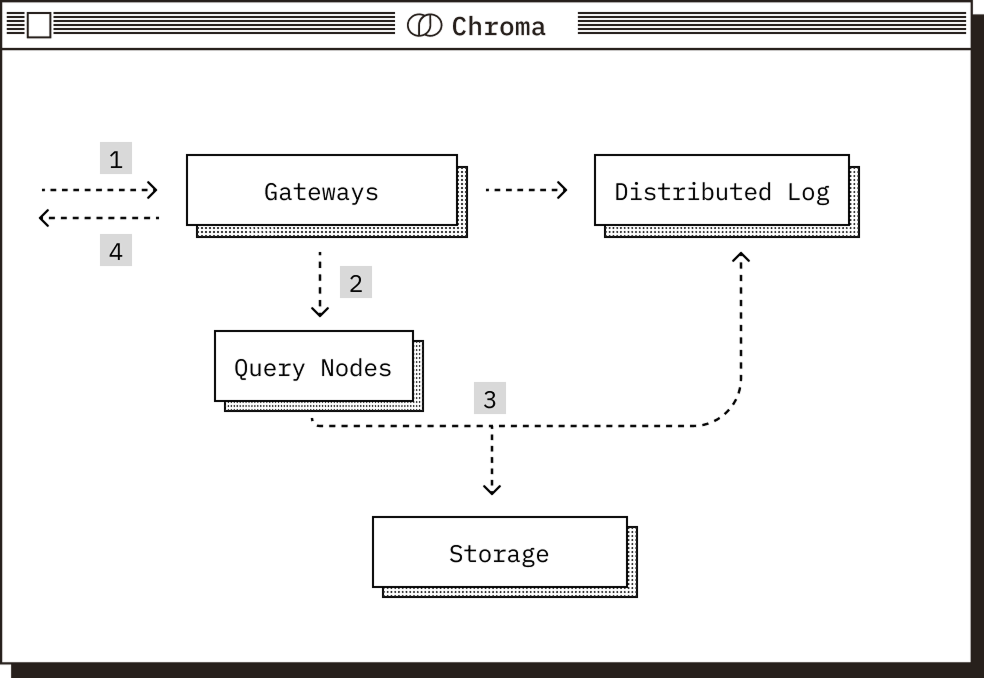

Read Path

A request arrives at the gateway, where it is authenticated, checked against quota limits, rate limited, and transformed into a logical plan.

The gateway routes the plan to the relevant query executor. In distributed Chroma, rendezvous hashing on the collection ID is used to route the query to the correct nodes and preserve cache coherence.

The query executor transforms the logical plan into a physical plan, reads from its storage layer, and consults the log to serve a consistent result.
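
The routing step in the read path relies on rendezvous (highest-random-weight) hashing. A minimal sketch of the technique follows. The node names, the choice of SHA-256 as the scoring hash, and the `rendezvous_pick` function are assumptions for illustration, not details taken from Chroma.

```python
import hashlib


def rendezvous_pick(collection_id, nodes):
    """Pick the node with the highest hash score for this collection."""

    def score(node):
        digest = hashlib.sha256(f"{node}:{collection_id}".encode()).digest()
        return int.from_bytes(digest[:8], "big")

    return max(nodes, key=score)


nodes = ["node-1", "node-2", "node-3", "node-4"]  # hypothetical executor nodes
owner = rendezvous_pick("collection-42", nodes)
```

Each collection's score for a node depends only on that node and the collection ID, so every gateway computes the same owner without coordination. Removing a node remaps only the collections that node owned, which keeps the caches on the remaining nodes warm.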

Write Path

The compactor periodically reads from the log and builds new vector, full-text, and metadata index versions.